How to Modernize Your Intrusion Detection System with AI and Autonomous Agents

Introduction

Traditional signature-based intrusion detection systems rely on known patterns: they know exactly what they are looking for. But as threats evolve, the question shifts from “does this match a known pattern?” to “does this actually make sense in context?” This guide walks you through evolving your intrusion detection architecture by integrating machine learning (for example, SnortML) and agentic AI, so your sensors can reason about traffic rather than just match it.

What You Need

- An existing signature-based intrusion detection system (e.g., Snort, Suricata)

- Access to historical network traffic logs (pcap files or metadata)

- A machine learning framework (TensorFlow, PyTorch, or scikit-learn)

- Computing resources capable of running ML inference (GPU recommended)

- Basic knowledge of Python and network protocols

- An agentic AI framework or platform (e.g., custom agents built with LangChain or Amazon Bedrock)

- Test environment for validation (avoid production during transition)

Step-by-Step Guide

Evaluate Your Current Signature-Based Setup

Before adding AI, audit your existing IDS. List all signatures, rules, and alerts. Identify which behaviors are captured by patterns and which are missed (e.g., zero-day attacks, encrypted threats). This baseline helps you measure improvement.
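Part of this audit can be scripted. The sketch below assumes Snort-style rule text in which each rule carries `classtype:` and `sid:` options; the function name `inventory_rules` is hypothetical. It counts enabled rules per classtype, giving a quick view of which attack categories your signatures cover well and which are thin.

```python
import re
from collections import Counter

# Matches option fields such as "classtype:web-application-attack;" and "sid:1000001;".
_OPTION = re.compile(r"\b(classtype|sid)\s*:\s*([^;]+);")


def inventory_rules(rule_lines):
    """Count enabled rules per classtype and collect their SIDs.

    Blank lines and commented-out (disabled) rules are skipped.
    """
    by_classtype = Counter()
    sids = []
    for line in rule_lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        options = dict(_OPTION.findall(line))
        by_classtype[options.get("classtype", "unclassified").strip()] += 1
        if "sid" in options:
            sids.append(int(options["sid"]))
    return by_classtype, sids
```

In practice you would pass it the lines of your `.rules` files (for example `open(path).readlines()`) and compare the classtype counts against the threat categories that matter to your environment.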

Collect and Prepare Training Data

Gather historical network traffic that includes both benign and malicious samples. Label the data if possible; otherwise, use unsupervised methods. Preprocess features: packet sizes, inter-arrival times, protocol headers, flow durations, and payload entropy. Normalize and split into training/validation sets.
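The preprocessing step can be sketched with the standard library alone. This is a minimal illustration, assuming each flow is already available as a time-ordered list of `(timestamp_seconds, payload_bytes)` tuples; the function names are hypothetical, payload length stands in for packet size, and protocol-header features are omitted for brevity.

```python
import math
import random
from collections import Counter
from statistics import mean


def payload_entropy(payload: bytes) -> float:
    """Shannon entropy in bits per byte: 0.0 for an empty payload or a single
    repeated byte value, up to 8.0 for uniformly distributed bytes."""
    if not payload:
        return 0.0
    n = len(payload)
    return sum((c / n) * math.log2(n / c) for c in Counter(payload).values())


def flow_features(packets):
    """Summarize one flow given time-ordered (timestamp_seconds, payload_bytes) tuples."""
    times = [t for t, _ in packets]
    sizes = [len(p) for _, p in packets]
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return {
        "duration": times[-1] - times[0],
        "mean_size": mean(sizes),
        "mean_gap": mean(gaps) if gaps else 0.0,  # mean inter-arrival time
        "entropy": payload_entropy(b"".join(p for _, p in packets)),
    }


def train_validation_split(rows, labels, val_fraction=0.2, seed=0):
    """Shuffle reproducibly, then hold out `val_fraction` of the rows for validation."""
    order = list(range(len(rows)))
    random.Random(seed).shuffle(order)
    cut = int(len(order) * (1 - val_fraction))
    train, val = order[:cut], order[cut:]
    return ([rows[i] for i in train], [labels[i] for i in train],
            [rows[i] for i in val], [labels[i] for i in val])


def fit_min_max(rows):
    """Learn per-feature (min, max) bounds from the training rows."""
    return {k: (min(r[k] for r in rows), max(r[k] for r in rows)) for k in rows[0]}


def apply_min_max(rows, bounds):
    """Scale features into [0, 1] using bounds from fit_min_max; constant features
    map to 0.0. Validation rows may fall slightly outside [0, 1]."""
    return [
        {k: (r[k] - lo) / (hi - lo) if hi > lo else 0.0 for k, (lo, hi) in bounds.items()}
        for r in rows
    ]
```

Note the order of operations: splitting before fitting the normalization bounds keeps information about the validation set from leaking into training, which would otherwise make validation scores look better than they are.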

Train an Initial Machine Learning Model

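Use your ML framework to train a model that outputs a maliciousness score. With labeled data, a supervised classifier (e.g., a decision tree or neural network) learns to separate benign from malicious traffic; without labels, an anomaly detector learns a baseline of normal traffic and flags flows that deviate from it. Either way, the goal is to move from exact pattern matching to statistical detection. Validate the model against your known attacks, measuring both the detection rate and the false positive rate.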
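To make the baseline idea concrete, here is a deliberately simple anomaly detector. It is a swapped-in technique, a per-feature z-score baseline rather than a decision tree or neural network, and the class name `BaselineDetector` is hypothetical. It assumes flows are represented as feature dictionaries such as those described in the previous step. The score it returns lies in [0, 1) but is not a calibrated probability.

```python
import math
from statistics import mean, pstdev


class BaselineDetector:
    """Flags flows whose features deviate strongly from a benign baseline.

    fit() learns the mean and standard deviation of each feature on benign
    traffic; score() takes the largest absolute z-score across features and
    maps it to [0, 1) via 1 - exp(-z), so z = 3 gives roughly 0.95.
    """

    def __init__(self, threshold: float = 0.95):
        self.threshold = threshold
        self.stats = {}

    def fit(self, benign_rows):
        for key in benign_rows[0]:
            values = [row[key] for row in benign_rows]
            # Guard against zero variance so constant features cannot divide by zero.
            self.stats[key] = (mean(values), pstdev(values) or 1e-9)
        return self

    def score(self, row) -> float:
        z = max(abs(row[k] - mu) / sigma for k, (mu, sigma) in self.stats.items())
        return 1.0 - math.exp(-z)

    def is_anomalous(self, row) -> bool:
        return self.score(row) >= self.threshold
```

A supervised classifier from TensorFlow, PyTorch, or scikit-learn would replace `fit` and `score` here, but keeping the same fit/score/threshold shape makes it easy to swap models later without touching the rest of the pipeline.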

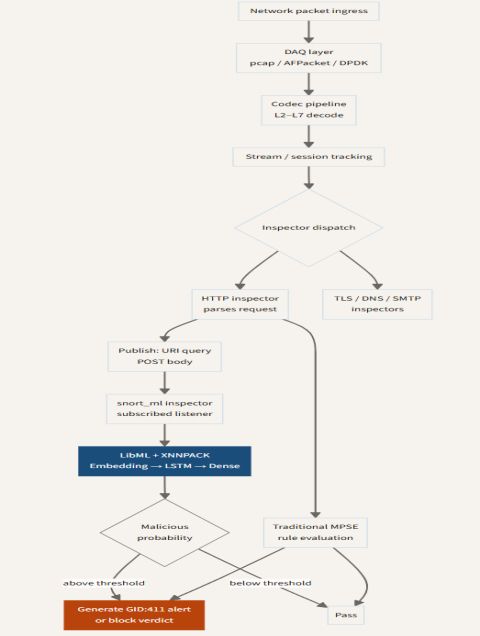

Integrate ML Inference into Your IDS Pipeline

Modify your IDS pipeline to pass suspicious packets or flow data to the ML model as a second stage. In Snort 3, SnortML provides built-in ML-based detection; a custom model can instead run as an external stage that consumes Snort's alerts or flow metadata. Keep latency low, for example by caching results for repeated feature patterns, and tune the alert threshold to balance false positives against false negatives.
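SnortML's own configuration is beyond the scope of this sketch; what follows illustrates only the generic external second-stage pattern. It assumes each first-stage alert arrives as a dictionary with a hashable `features` tuple, and `model_score` is a hypothetical placeholder for real model inference. Identical feature vectors hit the cache instead of re-running the model.

```python
from functools import lru_cache


def model_score(features: tuple) -> float:
    """Hypothetical placeholder for real model inference.

    Toy rule for illustration only: destination port 4444 (the first feature) is risky.
    """
    dst_port = features[0]
    return 0.99 if dst_port == 4444 else 0.10


class SecondStage:
    """Re-scores first-stage alerts with an ML model, caching repeated feature vectors."""

    def __init__(self, threshold: float = 0.8, cache_size: int = 10_000):
        self.threshold = threshold
        self.inference_calls = 0  # counts cache misses, i.e. real model invocations
        # Wrap the bound method so each instance gets its own bounded cache.
        self._score = lru_cache(maxsize=cache_size)(self._infer)

    def _infer(self, features: tuple) -> float:
        self.inference_calls += 1
        return model_score(features)

    def review(self, alert: dict) -> dict:
        # Feature tuples must be hashable to serve as cache keys.
        score = self._score(tuple(alert["features"]))
        return {**alert, "ml_score": score, "escalate": score >= self.threshold}
```

The `threshold` parameter is the knob the paragraph above refers to: raising it trades missed detections for fewer false alarms, and lowering it does the opposite.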

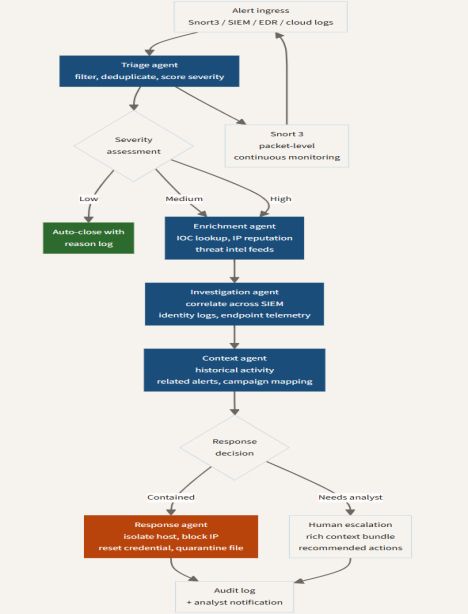

Design an Autonomous Agent for Contextual Analysis

Build or deploy an agentic AI that can reason about alerts beyond the model’s output. The agent should ingest alerts, query external threat intelligence, correlate them with network topology, and ask: “Does this event make sense in this context?” For example, an agent might suppress an SSH brute-force alert if it originates from a trusted admin workstation during a scheduled maintenance window.
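An LLM-driven agent would typically call deterministic tools for checks like the one in the example above. The sketch below shows only such a context check, not the agent itself; the trusted subnet, maintenance window, signature label `ssh-brute-force`, and function names are all hypothetical values you would source from your own asset inventory and change calendar. Threat intelligence lookups are omitted.

```python
from dataclasses import dataclass
from datetime import datetime, time
from ipaddress import ip_address, ip_network

# Hypothetical organizational context; replace with data from your CMDB and change calendar.
TRUSTED_ADMIN_NETS = [ip_network("10.10.5.0/24")]
MAINTENANCE_WINDOW = (time(1, 0), time(3, 0))  # 01:00 to 03:00 local time


@dataclass
class Alert:
    signature: str
    src_ip: str
    timestamp: datetime


def in_maintenance_window(ts: datetime) -> bool:
    start, end = MAINTENANCE_WINDOW
    return start <= ts.time() < end


def triage(alert: Alert) -> str:
    """Return 'suppress' when context explains the alert, otherwise 'escalate'."""
    src = ip_address(alert.src_ip)
    trusted = any(src in net for net in TRUSTED_ADMIN_NETS)
    if (alert.signature == "ssh-brute-force" and trusted
            and in_maintenance_window(alert.timestamp)):
        return "suppress"
    return "escalate"
```

Keeping these checks as plain, testable functions means the agent's reasoning can be audited: each suppression traces back to a specific, reviewable piece of context.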

Source: stackoverflow.blog

Hook the Agent to Respond Automatically

Configure the agent to act on its analysis: generate enriched tickets, block IPs, or adjust firewall rules. This step is crucial, because it is where agentic AI moves from detection to autonomous response. Test in a sandbox or simulator first to avoid unintended disruptions, and monitor the agent's decisions for a period before fully delegating response to it.
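One way to stage this safely is a dispatcher with a dry-run mode that records every decision without executing it. This is a minimal sketch: the `block_ip` and `open_ticket` hooks are hypothetical stand-ins for your firewall and ticketing integrations, and the `protected_ips` guardrail (never auto-block critical assets) is an added assumption rather than something the steps above prescribe.

```python
import logging
from dataclasses import dataclass, field

log = logging.getLogger("ids-agent")


@dataclass
class Responder:
    """Turns agent verdicts into actions. In dry-run mode every decision is
    recorded in the audit log but nothing is executed."""

    dry_run: bool = True
    protected_ips: set = field(default_factory=set)  # assets never auto-blocked
    audit_log: list = field(default_factory=list)
    executed: list = field(default_factory=list)

    def block_ip(self, ip: str) -> None:
        # Hypothetical hook: call your firewall or SOAR integration here.
        self.executed.append(("block_ip", ip))

    def open_ticket(self, summary: str) -> None:
        # Hypothetical hook: call your ticketing system here.
        self.executed.append(("open_ticket", summary))

    def handle(self, verdict: str, ip: str, summary: str) -> None:
        """verdict is one of 'block', 'ticket', or 'ignore'."""
        if verdict == "block" and ip in self.protected_ips:
            verdict = "ticket"  # downgrade: a human decides on critical assets
        if verdict == "block":
            action = ("block_ip", ip)
        elif verdict == "ticket":
            action = ("open_ticket", summary)
        else:
            action = None
        self.audit_log.append({"verdict": verdict, "action": action, "dry_run": self.dry_run})
        if action is None:
            return
        if self.dry_run:
            log.info("dry run, would perform %s", action)
            return
        name, arg = action
        getattr(self, name)(arg)
```

Running with `dry_run=True` during the monitoring period produces an audit log you can review against analyst judgment before switching to live execution.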

Iterate and Fine-Tune the Hybrid System

Continuously review the false positives and false negatives produced by both the ML model and the agent. Retrain models with new data, update the agent's reasoning rules, and refine thresholds. Document lessons learned so that each iteration improves both the model and the agent's judgment.
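Refining thresholds can be made systematic by scoring each candidate threshold against labeled validation results. The sketch below assumes you have model scores and ground-truth labels (1 for malicious, 0 for benign); the function names and the 5:1 cost ratio between missed attacks and false alarms are illustrative assumptions, not recommendations.

```python
def confusion_at(scores, labels, threshold):
    """Count false positives and false negatives when flagging score >= threshold."""
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    return fp, fn


def pick_threshold(scores, labels, fn_cost=5.0, fp_cost=1.0):
    """Return (threshold, fp, fn) for the candidate with the lowest weighted cost.

    Candidates are the distinct observed scores; ties keep the lowest threshold.
    """
    best = None
    for t in sorted(set(scores)):
        fp, fn = confusion_at(scores, labels, t)
        cost = fp_cost * fp + fn_cost * fn
        if best is None or cost < best[0]:
            best = (cost, t, fp, fn)
    return best[1], best[2], best[3]
```

For example, with scores `[0.1, 0.2, 0.35, 0.4, 0.7, 0.8, 0.9]` and labels `[0, 0, 1, 0, 1, 1, 1]`, the function picks 0.35, which catches every attack at the price of one false positive.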

Tips for Success

- Start small: Run the ML model in parallel with your signature system for a month, comparing alerts without disrupting existing workflows.

- Context is king: Even the best ML model can misfire without understanding business logic. Agentic AI shines when it can incorporate organizational context.

- Explainability matters: Use methods like SHAP or LIME to understand why the ML model flagged an event. This builds trust with analysts.

- Keep signatures as a safety net: Don’t discard signatures entirely; they’re still fast and reliable for known threats. Treat ML and agents as complementary layers.

- Monitor agent behavior: Autonomous agents can become overly aggressive or overly passive. Set up dashboards to track their actions, and override them when necessary.

- Plan for scaling: As traffic grows, distributed inference helps keep latency manageable, and federated learning lets multiple sensors contribute to a shared model without centralizing raw traffic. Think about cloud or edge deployment early.
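The parallel run suggested in the first tip comes down to comparing two alert sets. This sketch assumes both systems' alerts can be reduced to a shared hashable key (for example a flow ID); choosing that key is up to you, and the function name is hypothetical. Agreement is measured as the Jaccard index of the two sets.

```python
def compare_alerts(signature_alerts, ml_alerts):
    """Compare alert keys from a shadow run of the ML model against the signature IDS.

    Each alert must be identified by the same hashable key in both systems.
    """
    sig, ml = set(signature_alerts), set(ml_alerts)
    both = sig & ml
    union = sig | ml
    return {
        "both": both,
        "signature_only": sig - ml,  # possible gaps in the model
        "ml_only": ml - sig,         # novel detections or new false positives
        "agreement": len(both) / len(union) if union else 1.0,  # Jaccard index
    }
```

The `ml_only` set deserves the closest analyst review during the trial: it is where the model either earns its place by catching what signatures miss or reveals the false positives you need to tune away.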

By following these steps, you’ll transform your intrusion detection from a static pattern matcher into an adaptive, thinking sensor, one that asks “does this make sense?” rather than just “have I seen this before?” Embrace the evolution.
