Why Data Quality Is the Make-or-Break Factor for AI Success (From ML to Agentic Systems)

The Hidden Cost of Sloppy Data in AI

No team sets out to build a flawed model. Yet time and again, AI projects fail, not because of bad algorithms, but because the data they relied on was never as clean as it seemed. The symptoms are familiar: a pricing model quietly causes a $2.3 million margin shortfall, a customer-facing chatbot delivers confident but dangerously wrong answers, or an autonomous agent commits budget on incomplete supplier data. These aren't edge cases; they are among the most common reasons AI initiatives stall, drift, or die silently in production. Traditional machine learning has long wrestled with data quality, but generative AI and agentic AI raise the stakes dramatically.

Traditional Machine Learning: Visible Failures, Contained Damage

With classical ML models, data quality issues usually produce visible signals. A dashboard shows an unexpected number, an analyst catches the discrepancy, and the model gets retrained. The damage is often contained because the model's output is a prediction—a number, a classification, a score—that someone can review before acting on it. The relationship between data quality and ML has been manageable because failures manifest in ways that are traceable, even if they are costly.
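Here is a minimal sketch of that kind of visible signal. It assumes a pricing model whose predictions can later be compared with actual outcomes. The hypothetical `RollingErrorMonitor` below tracks the mean absolute percentage error over a sliding window and raises a flag when that error crosses a threshold. The window size and the 5% threshold are illustrative assumptions, not recommended values.

```python
from collections import deque


class RollingErrorMonitor:
    """Hypothetical monitor: tracks the absolute percentage error of recent
    predictions and flags when the rolling mean exceeds a threshold."""

    def __init__(self, window: int = 100, threshold: float = 0.05):
        self.errors = deque(maxlen=window)  # keeps only the most recent `window` errors
        self.threshold = threshold          # e.g. 0.05 means 5% mean absolute percentage error

    def record(self, predicted: float, actual: float) -> None:
        # Skip zero actuals to avoid dividing by zero.
        if actual != 0:
            self.errors.append(abs(predicted - actual) / abs(actual))

    def mean_error(self) -> float:
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def alert(self) -> bool:
        # The "visible signal": a dashboard number crossing a line.
        return self.mean_error() > self.threshold


monitor = RollingErrorMonitor(window=3, threshold=0.05)
for predicted, actual in [(102, 100), (98, 100), (110, 100)]:
    monitor.record(predicted, actual)
print(round(monitor.mean_error(), 4), monitor.alert())
```

The failure surfaces as a number crossing a threshold. That gives someone a chance to investigate before anyone acts on the predictions.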

Consider a retail pricing model trained on historical sales data that includes a few misrecorded transactions. The resulting predictions might be off by only 2–3%, yet across a quarter of sales that margin error can snowball into millions. Because the model operates within a controlled pipeline, though, the error is usually caught during validation or by a sharp-eyed analyst. The familiar cycle of spot, flag, and fix works, even if it sometimes comes with a price tag.
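The "flag" step can also happen before training rather than after a costly quarter. Below is a minimal sketch of a pre-training check. It assumes a hypothetical `Transaction` record with a quantity, a sale price, and a list price. The rules (non-positive values, discounts deeper than 50% off list) are illustrative stand-ins for whatever a real business would consider implausible.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    # Hypothetical schema for a historical sales record.
    txn_id: str
    quantity: int
    unit_price: float
    list_price: float


def validate_transactions(txns, max_discount=0.5):
    """Split records into (clean, flagged) before they reach training.

    A record is flagged if its quantity or prices are non-positive, or if it
    sold more than `max_discount` below list price (treated here, as an
    assumption, as a possible misrecorded price)."""
    clean, flagged = [], []
    for t in txns:
        reasons = []
        if t.quantity <= 0:
            reasons.append("non-positive quantity")
        if t.unit_price <= 0 or t.list_price <= 0:
            reasons.append("non-positive price")
        elif t.unit_price < t.list_price * (1 - max_discount):
            reasons.append("price far below list")
        if reasons:
            flagged.append((t, reasons))
        else:
            clean.append(t)
    return clean, flagged


records = [
    Transaction("t1", 2, 19.99, 19.99),
    Transaction("t2", 1, 1.99, 19.99),    # looks like a dropped digit
    Transaction("t3", -1, 19.99, 19.99),  # impossible quantity
]
clean, flagged = validate_transactions(records)
print([t.txn_id for t in clean], [(t.txn_id, r) for t, r in flagged])
```

Each flagged record keeps its reasons attached. An analyst can then correct or exclude it deliberately, rather than letting the model learn from it silently.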

Generative AI: Confident Wrong Answers Without Warning

Generative AI shatters that containment. A large language model (LLM) pulling from a stale knowledge base doesn't produce a simple number; it generates a coherent sentence that sounds authoritative. There is no dashboard alert for "confidently wrong." A chatbot answers a customer's question about return policies using outdated information, and the customer follows that advice. The model operated exactly as designed, on data that was never fit for purpose.
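A retrieval-backed chatbot can be given some of the error signal it otherwise lacks. One way is to filter the knowledge base by freshness before anything reaches the model. This sketch assumes a hypothetical `KBDocument` record with a `last_reviewed` date and a 90-day cutoff. Both are illustrative choices.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class KBDocument:
    # Hypothetical knowledge-base entry; `last_reviewed` is when a person
    # last confirmed the content is still accurate.
    doc_id: str
    text: str
    last_reviewed: date


def fresh_context(docs, today, max_age_days=90):
    """Keep only documents reviewed within `max_age_days` of `today`.

    Returns None when nothing qualifies, so the caller can decline to answer
    or hand off to a human instead of letting the model improvise from stale text."""
    cutoff = today - timedelta(days=max_age_days)
    fresh = [d for d in docs if d.last_reviewed >= cutoff]
    return fresh or None


docs = [
    KBDocument("returns-2021", "Returns accepted within 60 days.", date(2021, 3, 1)),
    KBDocument("returns-2024", "Returns accepted within 30 days.", date(2024, 5, 1)),
]
context = fresh_context(docs, today=date(2024, 6, 1))
print([d.doc_id for d in context] if context else "no fresh context")
```

A freshness cutoff does not make content correct. What it does is turn a silent failure (answering from stale text) into an explicit one (no usable context), and the caller can route that case to a person.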

The danger lies in the absence of error signals. Traditional ML models tend to degrade gradually, with rising error rates that a monitoring system can catch. Generative models, however, can produce perfectly fluent wrong answers in the same confident tone as correct ones. Data quality failures here are qualitative (garbage in, eloquent garbage out), and they are much harder to detect without a human reviewing outputs. This is why teams building on generative models treat data quality as a critical bottleneck, not just a hygiene factor.

Agentic AI: When AI Acts on Bad Data

The next level of risk comes with agentic AI—systems that don't just generate text but take actions in the world. An autonomous procurement agent, for example, might scan supplier databases for the best price and automatically commit budget. If the database contains incomplete supplier ratings or missing delivery-time estimates, the agent will act on that partial reality. A bargain price might actually lead to a delayed shipment that costs more in the long run, but by the time anyone reviews the decision, the budget is already spent.

Agentic AI systems operate on a simple truth: they will do exactly what the data tells them to do. In a fully autonomous setup, there is no human in the loop to question a data anomaly. Tolerance for data quality failures drops to near zero because the cost is immediate and tangible. And unlike in traditional ML, the failure is not a prediction that someone can review before acting; it is an action that has already happened. The further AI moves from prediction to action, the less tolerance there is for data flaws, and the harder those flaws are to catch before they cause damage.
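One common mitigation is a pre-action gate. Before the agent is allowed to act, the gate checks whether the data behind the decision is complete. The sketch below assumes a hypothetical `SupplierQuote` record in which `None` marks a missing field. It also uses a deliberately strict policy: if any quote is incomplete, the agent escalates to a person instead of ranking on partial data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SupplierQuote:
    # Hypothetical supplier record; None marks a missing field.
    supplier: str
    price: float
    rating: Optional[float] = None
    delivery_days: Optional[int] = None


def decide_purchase(quotes, max_delivery_days=14):
    """Return ("commit", quote) only when the choice rests on complete data;
    otherwise return ("escalate", reason) so a person reviews before money moves."""
    incomplete = [q.supplier for q in quotes if q.rating is None or q.delivery_days is None]
    if incomplete:
        # Refuse to rank on partial data: a missing delivery estimate could
        # hide exactly the delay that makes a bargain expensive.
        return "escalate", f"incomplete records: {', '.join(incomplete)}"
    eligible = [q for q in quotes if q.delivery_days <= max_delivery_days]
    if not eligible:
        return "escalate", "no supplier meets the delivery window"
    # Only now is it safe to pick the cheapest option.
    return "commit", min(eligible, key=lambda q: q.price)


quotes = [
    SupplierQuote("A", price=950.0, rating=4.2, delivery_days=10),
    SupplierQuote("B", price=800.0),  # cheapest, but rating and delivery estimate missing
]
print(decide_purchase(quotes))  # ('escalate', 'incomplete records: B')
```

A looser policy could simply skip incomplete quotes, but that would itself be a data decision. In this example, skipping supplier B would hide the fact that the cheapest option was never properly evaluated.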

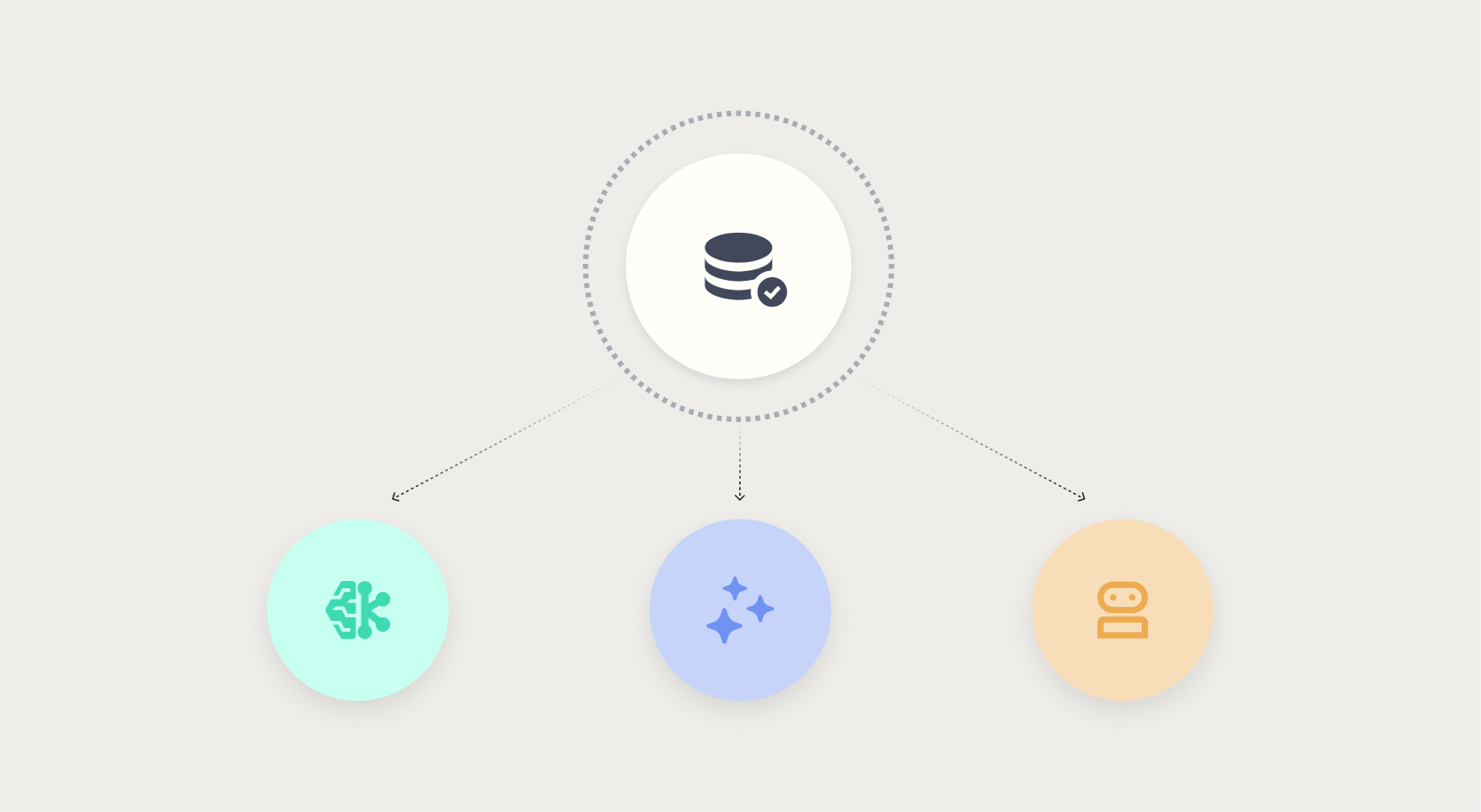

Comparing the Three AI Paradigms

- Traditional ML: Data errors produce visible metric shifts; damage is usually contained and fixable.

- Generative AI: Data errors produce confident wrong text; damage is hard to detect without review.

- Agentic AI: Data errors trigger irreversible actions; damage occurs before any review can happen.

The Bottom Line: Data Quality as a Strategic Imperative

Data quality has always mattered, but the AI landscape is rewriting the consequences of neglect. For traditional ML, poor data meant a wrong prediction that could be corrected. For generative AI, it means confident misinformation that spreads effortlessly. For agentic AI, it means autonomous actions that can be costly and hard to reverse. Organizations that invest in robust data validation pipelines, continuous monitoring, and data lineage tracking give their AI projects the best chance of surviving production.

The lesson is clear: no one builds a bad AI model on purpose, but teams routinely build models on data that looked clean until it wasn't. The time to fix data quality is before the model ships, not after the chatbot has misled a thousand customers or the agent has wasted a quarterly budget. As AI evolves, data quality is no longer just a data engineering problem; it is a business survival skill.
